Amlan Kar

<first_name>@cs.toronto.edu

University of Toronto

Biography

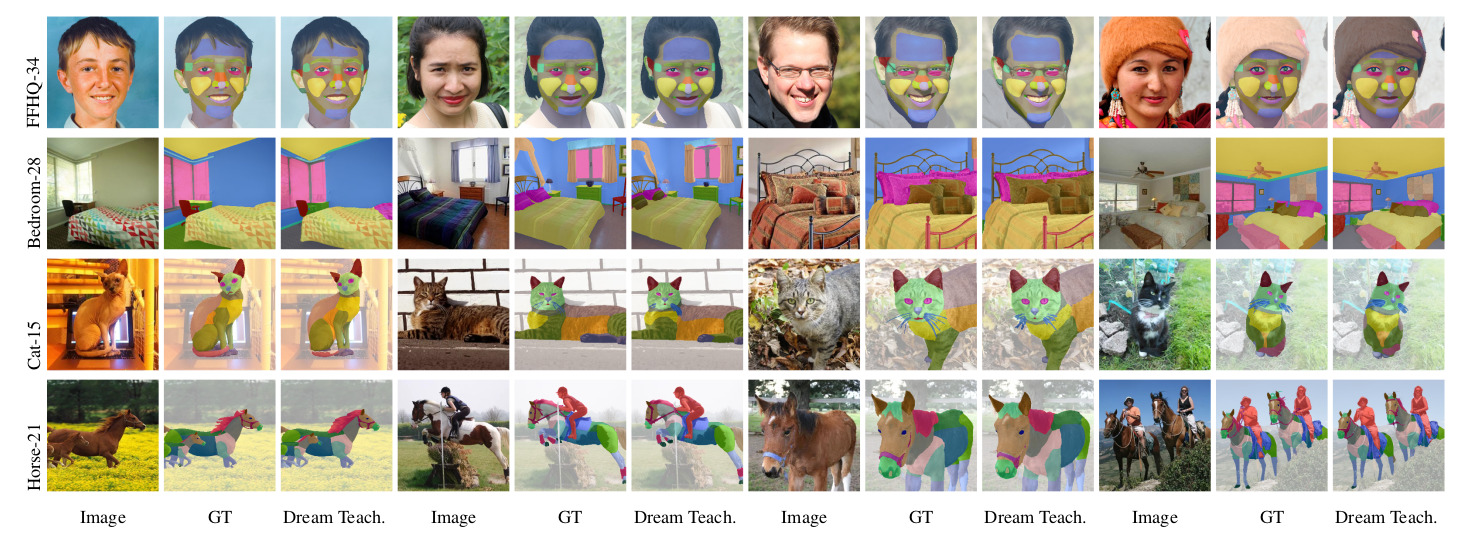

I’m a Senior Research Scientist at NVIDIA Research in the Toronto AI Lab and a PhD student at the University of Toronto, advised by Prof. Sanja Fidler. I am broadly interested in generative models, with a soft spot for creative 3D tasks, and in data for computer vision problems, particularly in how data can be collected and labelled more quickly with AI assistance, or generated automatically. I graduated from IIT Kanpur, India, in 2017 with a bachelor’s degree; while there, I was fortunate to work with Prof. Gaurav Sharma and, earlier, with Prof. Amitabha Mukerjee. I have interned with the research team at Fyusion Inc. and with Prof. Raquel Urtasun and Prof. Sanja Fidler at the University of Toronto during my undergraduate studies.